7 Proven Digital Forensic Analysis Steps for Legal Evidence

Modern incidents don’t fail because security teams lack tools—they fail because the evidence wasn’t collected, preserved, or correlated in a way that survives audits, regulators, insurance reviews, or legal scrutiny.

A real breach investigation needs more than “we saw suspicious activity.” It needs:

- Forensic-grade telemetry (identity + app + infra + cloud + CI/CD)

- Chain-of-custody controls (who collected what, when, how it was protected)

- A defensible breach timeline reconstruction that can be repeated and verified

- A clear incident investigation workflow that produces legal-grade evidence

If you need expert help with an investigation or want to build a forensic-ready program, start here:

- Digital Forensic Analysis Services: https://www.pentesttesting.com/digital-forensic-analysis-services/

- Risk Assessment Services: https://www.pentesttesting.com/risk-assessment-services/

- Remediation Services: https://www.pentesttesting.com/remediation-services/

Why modern breaches require forensic-grade telemetry + chain-of-custody

Attackers increasingly blend into “normal” traffic: valid sessions, legitimate OAuth tokens, cloud console activity, CI/CD automation, and API calls that look routine—until you correlate them across systems.

That’s why digital forensic analysis must be evidence-driven:

- Telemetry proves what happened (and what didn’t)

- Chain-of-custody proves your evidence wasn’t altered

- Correlation turns isolated logs into a coherent narrative

Common investigation failures (and how to avoid them)

These are the repeat offenders we see when organizations struggle to prove impact:

- Missing timestamps / time drift (no NTP, mixed time zones, inconsistent formats)

- Altered logs (investigators “clean up” first and collect evidence later)

- Incomplete trace correlation (no request IDs, no session trails, no identity context)

- Short retention (cloud logs overwritten, CI/CD runs expired, SaaS audit logs not enabled)

- No evidence manifest (no hashes, no acquisition notes, no repeatable process)

The rest of this guide is a practical workflow to prevent those failures.

The 7-Step Incident Investigation Workflow (Evidence-First)

Step 1) Stabilize the incident without destroying evidence

Containment matters—but sloppy containment can erase the truth.

Do:

- Snapshot state (before “fixing”)

- Preserve logs and volatile data (where feasible)

- Document every action (who/what/when/why)

Avoid:

- Restarting services without capturing logs

- Reimaging hosts before collecting artifacts

- “Cleaning up suspicious files” before hashing/copying them
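One way to make "document every action" tamper-evident is an append-only log in which each entry's hash covers the previous entry's hash. The sketch below is illustrative only: the function names, the JSONL layout, and the field names (`actor`, `action`, `reason`) are assumptions, not part of any standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path

GENESIS = "0" * 64  # placeholder back-link for the first entry (assumption)


def append_case_log(log_path: Path, actor: str, action: str, reason: str) -> dict:
    """Append one UTC-stamped entry whose hash covers the previous entry's hash."""
    prev_hash = GENESIS
    if log_path.exists():
        lines = log_path.read_text(encoding="utf-8").splitlines()
        if lines:
            prev_hash = json.loads(lines[-1])["entry_hash"]
    entry = {
        "utc_time": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "actor": actor,
        "action": action,
        "reason": reason,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["entry_hash"] = hashlib.sha256(payload).hexdigest()
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry, sort_keys=True) + "\n")
    return entry


def verify_case_log(log_path: Path) -> bool:
    """Return True if every entry's hash and back-link are intact."""
    prev_hash = GENESIS
    for line in log_path.read_text(encoding="utf-8").splitlines():
        entry = json.loads(line)
        claimed = entry.pop("entry_hash")
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != claimed:
            return False
        prev_hash = claimed
    return True
```

Editing or deleting an earlier entry breaks the chain from that point on. This makes tampering visible but does not prevent it, so it complements immutable storage rather than replacing it.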

Quick “first actions” checklist (copy/paste)

[ ] Confirm scope: systems, identities, time window

[ ] Freeze retention risk: extend log retention NOW

[ ] Start a case log: every action timestamped (UTC)

[ ] Acquire evidence: copy, hash, store immutably

[ ] Only then: containment + remediation workstreams

Step 2) Create a case folder + chain-of-custody record

Start with a standard structure so the investigation stays repeatable.

Bash: create a case workspace (UTC-stamped)

    CASE_ID="PTC-INC-$(date -u +%Y%m%d-%H%M%SZ)"
    mkdir -p "$CASE_ID"/{notes,evidence,hashes,exports,timeline,report}
    # Exported so later scripts that read CASE_DIR can locate the case folder
    export CASE_DIR="$PWD/$CASE_ID"
    printf "Case: %s\nCreated(UTC): %s\nOwner: %s\n" \
      "$CASE_ID" "$(date -u +%F\ %T)" "$USER" > "$CASE_ID/notes/case_log.txt"

Template: chain-of-custody entry (append-only)

UTC Time: 2026-03-08 12:30:10

Collector: <name>

Source: <system/service>

Method: <export command / API / screenshot / snapshot>

Evidence ID: <file name or object key>

Hash: <sha256>

Storage: <WORM bucket / vault path>

Notes: <any relevant conditions>

Step 3) Evidence acquisition strategy (web apps, APIs, cloud, CI/CD)

A strong forensic evidence collection plan pulls from four planes:

A) Web apps + APIs (application truth)

- Reverse proxy / CDN logs (edge truth)

- App logs (business logic truth)

- WAF/API gateway logs (control truth)

- Auth events (identity truth)

- Database audit logs (data truth)

B) Cloud workloads (infrastructure truth)

- Cloud audit logs (IAM, API calls)

- Object storage access logs

- VPC/flow logs (network truth)

- K8s audit logs (orchestrator truth)

C) CI/CD (supply chain truth)

- Build and deployment logs

- Artifact/signing logs

- Secrets access events (vault / env / runner logs)

D) Identity + SaaS (who did what)

- SSO/IdP sign-ins

- MFA events

- OAuth/consent events

- Role changes and token usage
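To keep acquisition from silently skipping a plane, the four lists above can be encoded as a small coverage map and compared against what has been collected so far. This is a sketch only: the plane keys and source labels are shorthand invented for this example, not a standard taxonomy.

```python
# Labels mirror the four planes listed above; the names are illustrative.
PLANES = {
    "web_api": ["proxy_cdn_logs", "app_logs", "waf_gateway_logs",
                "auth_events", "db_audit_logs"],
    "cloud": ["cloud_audit_logs", "object_storage_access_logs",
              "flow_logs", "k8s_audit_logs"],
    "cicd": ["build_deploy_logs", "artifact_signing_logs",
             "secrets_access_events"],
    "identity_saas": ["idp_sign_ins", "mfa_events",
                      "oauth_consent_events", "role_token_events"],
}


def coverage_gaps(collected: set[str]) -> dict[str, list[str]]:
    """Return, per plane, the sources that have not been collected yet."""
    gaps = {}
    for plane, sources in PLANES.items():
        missing = [s for s in sources if s not in collected]
        if missing:
            gaps[plane] = missing
    return gaps


if __name__ == "__main__":
    done = {"proxy_cdn_logs", "app_logs", "cloud_audit_logs", "idp_sign_ins"}
    for plane, missing in coverage_gaps(done).items():
        print(f"{plane}: still missing {', '.join(missing)}")
```

Recording the gaps in the case log, along with the reason a source was unavailable, helps explain later why a given plane is absent from the timeline.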

Step 4) Acquire evidence safely + verify integrity with hashes

Hash everything (SHA-256) immediately after export

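    cd "$CASE_ID/evidence"
    # Hash every file, including those in subdirectories, in a stable order (GNU tools)
    find . -type f -print0 | sort -z | xargs -0 -r sha256sum | tee "../hashes/sha256_manifest.txt"

Python: build a JSON evidence manifest (audit-friendly)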

    import hashlib, json, os, time
    from pathlib import Path

    # CASE_DIR should point at the case folder; resolve() keeps case_id
    # meaningful even when the script is run from inside that folder.
    case_dir = Path(os.environ.get("CASE_DIR", ".")).resolve()
    evidence_dir = case_dir / "evidence"

    manifest = {
        "case_id": case_dir.name,
        "generated_utc": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "items": [],
    }

    def sha256_file(path: Path) -> str:
        # Stream in 1 MiB chunks so large evidence files don't exhaust memory
        h = hashlib.sha256()
        with path.open("rb") as f:
            for chunk in iter(lambda: f.read(1024 * 1024), b""):
                h.update(chunk)
        return h.hexdigest()

    for p in sorted(evidence_dir.glob("**/*")):
        if p.is_file():
            manifest["items"].append({
                "path": p.relative_to(case_dir).as_posix(),
                "bytes": p.stat().st_size,
                "sha256": sha256_file(p),
            })

    out_path = case_dir / "hashes" / "evidence_manifest.json"
    out_path.parent.mkdir(parents=True, exist_ok=True)
    out_path.write_text(json.dumps(manifest, indent=2))
    print("Wrote hashes/evidence_manifest.json")

Best practice: store exported evidence in immutable/WORM storage (object lock or vault controls) and treat your working copy as non-authoritative.
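Because the working copy is non-authoritative, it helps to re-check it against the manifest before each analysis session. The sketch below assumes the `hashes/evidence_manifest.json` layout written by the script above (an `items` list with `path` and `sha256`); `verify_manifest` is a hypothetical helper name.

```python
import hashlib
import json
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Stream a file through SHA-256 in 1 MiB chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1024 * 1024), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_manifest(case_dir: Path) -> list[str]:
    """Return a list of problems; an empty list means every item still matches."""
    manifest_path = case_dir / "hashes" / "evidence_manifest.json"
    manifest = json.loads(manifest_path.read_text())
    problems = []
    for item in manifest["items"]:
        path = case_dir / item["path"]
        if not path.is_file():
            problems.append(f"missing: {item['path']}")
        elif sha256_file(path) != item["sha256"]:
            problems.append(f"hash mismatch: {item['path']}")
    return problems
```

Note that this only checks files the manifest knows about. Files added to the evidence folder afterwards are not reported, so a stricter version would also compare the directory listing against the manifest.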

Step 5) Timeline reconstruction using request IDs, session trails, and infra logs

If you can’t correlate events across systems, you’ll end up with “possible” instead of “proven.”

Add/standardize a request ID (app + proxy)

Nginx example (log request_id + upstream timing):

    log_format forensic_json escape=json
      '{ "ts":"$time_iso8601", "req_id":"$request_id", "ip":"$remote_addr", '
      '"method":"$request_method", "uri":"$request_uri", "status":$status, '
      '"bytes":$body_bytes_sent, "ref":"$http_referer", "ua":"$http_user_agent", '
      '"rt":$request_time, "urt":"$upstream_response_time" }';

    access_log /var/log/nginx/access_forensic.json forensic_json;

Node.js (Express) example: enforce correlation ID + structured logs

    import crypto from "crypto";
    import pino from "pino";

    const log = pino();

    export function correlation(req, res, next) {
      // Reuse a reasonably sized incoming ID; otherwise mint a new one
      const incoming = req.header("x-request-id");
      const reqId = incoming && incoming.length < 128 ? incoming : crypto.randomUUID();

      req.reqId = reqId;
      res.setHeader("x-request-id", reqId);

      // The child logger stamps every line for this request with req_id
      req.log = log.child({ req_id: reqId });
      req.log.info({ path: req.path, method: req.method }, "request_start");

      res.on("finish", () => {
        req.log.info({ status: res.statusCode }, "request_end");
      });

      next();
    }

Python: merge multi-source logs into a single ordered timeline

This example expects JSONL exports (one JSON object per line) from proxy/app/cloud. It sorts all events by timestamp and writes the request, session, and user identifiers to their own columns so related events can be filtered together.

    # Run from inside the case folder (expects exports/ and writes timeline/)
    import csv, glob, json, os
    from datetime import datetime, timezone

    def parse_ts(s):
        # Accept ISO-8601; adjust as needed for your environment
        dt = datetime.fromisoformat(s.replace("Z", "+00:00"))
        if dt.tzinfo is None:
            # Assumption: timestamps without an offset are already UTC
            dt = dt.replace(tzinfo=timezone.utc)
        return dt.astimezone(timezone.utc)

    events = []
    for fn in glob.glob("exports/*.jsonl"):
        source = os.path.basename(fn)
        with open(fn, "r", encoding="utf-8") as f:
            for line in f:
                if not line.strip():
                    continue  # skip blank lines
                obj = json.loads(line)
                ts = obj.get("ts") or obj.get("timestamp")
                if not ts:
                    continue
                # Keep derived values outside obj so the raw record stays JSON-serializable
                events.append((parse_ts(ts), source, obj))

    events.sort(key=lambda e: e[0])

    os.makedirs("timeline", exist_ok=True)
    with open("timeline/timeline.csv", "w", encoding="utf-8", newline="") as out:
        # csv.writer quotes the embedded JSON so its commas don't break columns
        writer = csv.writer(out)
        writer.writerow(["utc_time", "source", "req_id", "session", "user", "event", "details"])
        for ts, source, e in events:
            writer.writerow([
                ts.isoformat().replace("+00:00", "Z"),
                source,
                e.get("req_id", ""),
                e.get("session", ""),
                e.get("user", ""),
                e.get("event", ""),
                json.dumps(e, ensure_ascii=False),
            ])

    print(f"Wrote {len(events)} events to timeline/timeline.csv")

What this enables: “At 02:14:31Z the attacker authenticated, at 02:14:33Z elevated privileges, at 02:14:41Z exported data…” rather than vague suspicions.
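Once events are on a single clock, grouping them by request ID rebuilds each request's trail across systems. The sketch below assumes every event is a dict with a `ts` string (all in the same UTC ISO-8601 format, so string order equals time order), an optional `req_id`, and a `src` label naming the originating log. The `src` field and both function names are hypothetical.

```python
from collections import defaultdict


def group_by_request(events: list[dict]) -> dict[str, list[dict]]:
    """Group time-ordered events by req_id; events without one go under ''."""
    trails = defaultdict(list)
    for e in sorted(events, key=lambda e: e["ts"]):
        trails[e.get("req_id") or ""].append(e)
    return dict(trails)


def cross_source_requests(trails: dict[str, list[dict]]) -> list[str]:
    """Request IDs observed in more than one log source."""
    return sorted(
        rid for rid, evs in trails.items()
        if rid and len({e["src"] for e in evs}) > 1
    )


if __name__ == "__main__":
    sample = [
        {"ts": "2026-03-08T02:14:33Z", "src": "app.jsonl", "req_id": "r1"},
        {"ts": "2026-03-08T02:14:31Z", "src": "proxy.jsonl", "req_id": "r1"},
        {"ts": "2026-03-08T02:14:41Z", "src": "cloud.jsonl"},
    ]
    trails = group_by_request(sample)
    print(cross_source_requests(trails))
```

Events that land under the empty key are worth reviewing separately, since they show where correlation IDs are not yet propagated.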

Step 6) Indicators of attacker activity (high-signal patterns)

In evidence-driven investigations, you’re hunting for actions that change capability:

- Privilege escalation: new roles, policy changes, admin grants

- Token reuse: same token/refresh token across different IPs/agents

- Lateral movement: unusual service-to-service calls, new API keys, new runners

- Persistence: new OAuth apps, scheduled jobs, new SSH keys, new forwarding rules

- Data access: abnormal query volume, exports, new storage presigned URLs, unusual downloads

Example: flag “impossible travel” sign-in pattern (simple logic)
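A minimal sketch of this check, assuming sign-in records have already been geolocated. The field names (`user`, `time`, `lat`, `lon`), the 900 km/h speed ceiling, and the 50 km noise floor are all illustrative assumptions to tune for your environment.

```python
import math
from datetime import datetime, timezone


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def impossible_travel(signins, max_kmh=900.0, min_km=50.0):
    """Yield consecutive sign-in pairs per user whose implied speed exceeds max_kmh."""
    last_seen = {}
    for s in sorted(signins, key=lambda s: s["time"]):
        prev = last_seen.get(s["user"])
        if prev is not None:
            hours = (s["time"] - prev["time"]).total_seconds() / 3600
            km = haversine_km(prev["lat"], prev["lon"], s["lat"], s["lon"])
            # Ignore short hops: IP geolocation is too coarse to trust them
            if km > min_km and (hours == 0 or km / hours > max_kmh):
                yield prev, s
        last_seen[s["user"]] = s
```

A hit is a lead, not proof: VPNs, mobile carriers, and corporate egress points routinely produce the same pattern, so each flagged pair should be checked against device and session context.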

# Conceptual: compare geo + time deltas from IdP audit exports

# If distance/time implies unrealistic travel, mark as suspicious.

Example: detect token reuse across IPs (log correlation idea)
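A rough Python rendering of the token-reuse rule, assuming events have been exported as dicts. The field names (`token_id`, `time`, `ip`, `ua`) and the function name are hypothetical; the 10-minute default window follows the rule stated in this example.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def token_reuse(events, window=timedelta(minutes=10)):
    """Return token IDs used from different IPs AND user agents within `window`."""
    by_token = defaultdict(list)
    for e in events:
        by_token[e["token_id"]].append(e)

    flagged = set()
    for token, evs in by_token.items():
        evs.sort(key=lambda e: e["time"])
        for i, first in enumerate(evs):
            for later in evs[i + 1:]:
                if later["time"] - first["time"] > window:
                    break  # events are sorted, so the rest are outside the window
                if first["ip"] != later["ip"] and first["ua"] != later["ua"]:
                    flagged.add(token)
    return sorted(flagged)
```

The pairwise comparison is quadratic per token, which is fine for an incident-sized export but would need a sliding window for continuous monitoring.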

Group by (token_id OR session_id)

If count(distinct ip) > 1 within 10 minutes AND user_agent differs => investigate

Step 7) Build incident reports that support legal, regulatory, and compliance needs

A strong report reads like a story backed by facts:

Recommended report structure (enterprise DFIR-friendly)

- Executive summary (impact, scope, status, next actions)

- Environment + scope (systems, identities, time window, assumptions)

- Evidence list (what was collected + hashes + locations)

- Breach timeline reconstruction (UTC, source-referenced)

- Attack narrative (initial access → escalation → objectives)

- Impact analysis (data accessed/changed, systems affected)

- Containment actions (what was done, by whom, when)

- Remediation plan (prioritized fixes, owners, deadlines)

- Appendices (queries, key logs, indicators, screenshots)

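When you’re ready to move from findings to fixes, link the remediation plan directly to:

- Remediation Services: https://www.pentesttesting.com/remediation-services/

And when you need an independent assessment to validate controls post-incident:

- Risk Assessment Services: https://www.pentesttesting.com/risk-assessment-services/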

Forensic readiness: prepare systems today so future incidents are fast to investigate

Forensic readiness is the difference between a 2-day timeline and a 2-month argument.

Minimum readiness controls (high ROI)

- Time sync everywhere: NTP enforced; logs normalized to UTC

- Structured logging: JSON logs with request/session/user identifiers

- Centralized log pipeline: app, proxy, cloud audit, CI/CD, IdP in one place

- Retention aligned to risk: don’t lose the week that matters

- Tamper-evidence: immutable storage + strict access controls

- Trace correlation: request IDs, trace IDs, session trails
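Several of these controls can be spot-checked by sampling log lines and confirming they carry the fields an investigation will need. The sketch below is one possible check; the required field names (`ts`, `req_id`, `session_id`, `user_id`) are assumptions, so substitute whatever your logging schema actually uses.

```python
import json
from datetime import datetime

# Hypothetical schema: adjust to the identifiers your logs really emit
REQUIRED = ("ts", "req_id", "session_id", "user_id")


def check_log_line(line: str) -> list[str]:
    """Return readiness problems for one JSON log line (empty list = OK)."""
    try:
        obj = json.loads(line)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(obj, dict):
        return ["not a JSON object"]

    problems = [f"missing {k}" for k in REQUIRED if not obj.get(k)]

    ts = obj.get("ts")
    if ts:
        try:
            parsed = datetime.fromisoformat(str(ts).replace("Z", "+00:00"))
        except ValueError:
            problems.append("ts is not ISO-8601")
        else:
            offset = parsed.utcoffset()
            if offset is None:
                problems.append("ts has no UTC offset")
            elif offset.total_seconds() != 0:
                problems.append("ts is not in UTC")
    return problems
```

Running this over a sample from each log source before an incident shows which systems would leave gaps in a future timeline.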

“Add correlation now” checklist (web + API)

[ ] x-request-id enforced at edge

[ ] request_id logged in proxy + app logs

[ ] auth events include user_id + session_id

[ ] admin actions include actor_id + change details

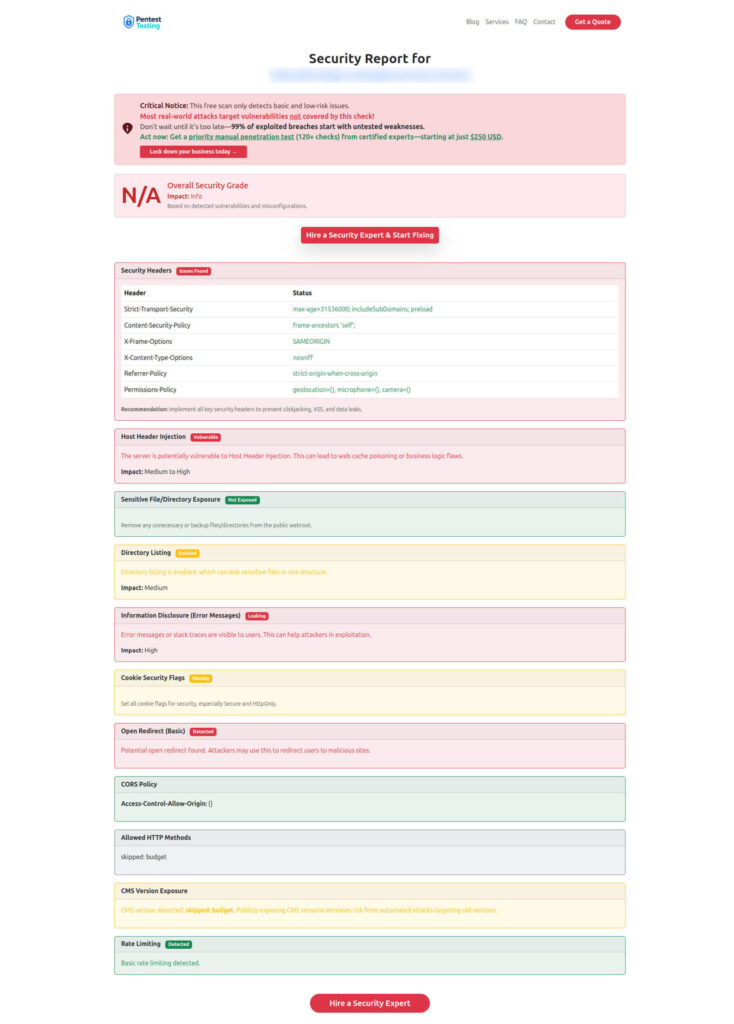

[ ] token issuance / refresh events capturedUse our free tool to baseline web exposure (screenshots)

Even though digital forensic analysis is incident-driven, prevention and readiness start with visibility. A fast baseline scan can help you spot common web misconfigurations that often become “evidence gaps” during incidents.

[Screenshot: Free Website Vulnerability Scanner tool dashboard]

[Screenshot: sample assessment report to check Website Vulnerability]

Related reading (recent posts on our blog)

If you’re building an evidence-first program, these recent guides pair well with this incident investigation workflow:

- https://www.pentesttesting.com/forensic-readiness-smb-log-retention/

- https://www.pentesttesting.com/adaptive-webhook-security-best-practices/

- https://www.pentesttesting.com/api-logic-abuse-detection-risk-scoring/

Next steps

- Start a Risk Assessment: https://www.pentesttesting.com/risk-assessment-services/

- Fix gaps fast (Remediation): https://www.pentesttesting.com/remediation-services/

- Get DFIR help: https://www.pentesttesting.com/digital-forensic-analysis-services/
