9 Proven API Abuse Detection Plays WAFs Miss

Traditional WAFs and flat rate limits are great at blocking known bad patterns. But API abuse detection is a different game: attackers can look “normal” per request while quietly draining value through API logic abuse, sequence manipulation, and downstream resource exhaustion.

This guide shows practical, production-ready detection signals and response tactics you can implement today—without turning your API into a CAPTCHA maze.

Want an expert, end-to-end validation of your API controls (authz, abuse, logic, data exposure)? Start here:

API Penetration Testing: https://www.pentesttesting.com/risk-assessment-services/

(Or go direct to API testing services: https://www.pentesttesting.com/api-pentest-testing-services/)

1) Modern API abuse patterns that evade WAFs

Business logic abuse (high impact, low volume)

This is the category where each request is valid but the intent is hostile:

- promo/coupon enumeration

- inventory/cart hoarding

- OTP/email/SMS spam through legitimate flows

- scraping proprietary data via allowed endpoints

- “low-and-slow” account takeover patterns (many accounts, low frequency each)

Chained endpoints + sequence manipulation

WAFs usually inspect a request in isolation. Abuse often lives in the sequence:

- login → token refresh → export loops

- password reset endpoints used as an oracle (existence checks)

- browse/search patterns that mimic users but at machine precision

Indirect amplification (resource exhaustion without “high traffic”)

One request can fan out into many expensive operations:

- heavy search endpoints (DB + cache misses)

- report generation

- export endpoints

- image/PDF rendering

- webhook retries that multiply

Parameter ambiguity and pollution

Attackers exploit parser differences:

- duplicate keys (`id=1&id=2`)

- mixed content-type parsing edge cases

- nested parameters that hit different code paths
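The ambiguity is easy to reproduce with Python's standard library alone: `dict(urllib.parse.parse_qsl("id=1&id=2"))` keeps the last value, while `urllib.parse.parse_qs` keeps both. Here is a minimal sketch of one defensive option. It assumes you can see the raw query string before your framework parses it, and it flags (or lets you reject) any parameter name that appears more than once. The helper name `duplicate_params` is hypothetical, and the sketch does not cover body or content-type differences.

```python
from urllib.parse import parse_qsl


def duplicate_params(query: str) -> set[str]:
    """Return parameter names that appear more than once in a raw query string."""
    seen: set[str] = set()
    dupes: set[str] = set()
    # keep_blank_values=True so "id=&id=2" still counts as a duplicate
    for name, _value in parse_qsl(query, keep_blank_values=True):
        if name in seen:
            dupes.add(name)
        seen.add(name)
    return dupes


# Two parsers, two answers for the same input:
#   dict(parse_qsl("id=1&id=2"))  keeps the last value
#   parse_qs("id=1&id=2")         keeps both values in a list
```

Rejecting requests with duplicated security-relevant parameters (IDs, amounts, roles) is usually safer than trying to predict which value each layer will use.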

The defensive lesson: API abuse detection must be stateful and cost-aware.

2) Why traditional WAF signatures and thresholds fall short

Stateless rules vs. stateful abuse:

WAFs are strongest when a single request is obviously malicious. API abuse is frequently “clean requests” arranged into harmful behavior.

Per-IP rate limits are easy to route around:

- NAT pools, mobile networks, rotating proxies, distributed fleets

- abuse spread across accounts/tokens (not IPs)
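This small illustration shows why the counting key matters. The sketch assumes each log event carries a client IP and an API key ID (a hypothetical field layout). It compares the busiest IP with the busiest key in one window. A proxy-rotating client can stay at one request per IP while a single key carries the whole load.

```python
from collections import Counter


def peak_counts(events):
    """events: (client_ip, api_key_id) pairs observed in one time window.
    Returns (requests from the busiest IP, requests from the busiest key)."""
    events = list(events)  # accept any iterable; two passes follow
    by_ip = Counter(ip for ip, _ in events)
    by_key = Counter(key for _, key in events)
    return max(by_ip.values(), default=0), max(by_key.values(), default=0)


# One API key spread over 200 distinct proxy IPs: each IP sends a single request
window = [(f"198.51.100.{i}", "key_8d2") for i in range(200)]
```

Here `peak_counts(window)` returns `(1, 200)`. A per-IP limit of 100/min never fires, while a per-key limit does.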

Thresholds ignore business cost:

“100 requests/min” means nothing if 5 requests trigger 10,000 DB reads.

WAFs don’t see downstream signals:

- DB timeouts, queue depth, cache miss storms

- application error codes that indicate enumeration or automation

3) The detection signals that actually matter

Below are high-signal indicators for API abuse detection that stay meaningful when they show up in alerts.

Signal A — Entropy shifts (mutation, guessing, automation)

Human traffic is patterned but irregular. Bots tend to be either too systematic (walking sequential IDs) or too uniformly random (guessing codes).

Practical approach: measure entropy of:

- parameter values (IDs, coupons, emails) per principal

- endpoint selection over a time window

- error code distribution (e.g., lots of 404/422/401 bursts)
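There is one caveat when you measure the entropy of raw values. Shannon entropy over whole values only counts repetition, so five distinct sequential IDs and five distinct random codes both score log2(5) ≈ 2.32 bits. A complementary feature, assuming numeric IDs, is the entropy of the gaps between consecutive IDs a principal requests. Enumeration (1001, 1002, 1003, ...) repeats a single gap and scores 0, while organic access usually produces many different gaps. The sketch below is hedged: `delta_entropy` is a hypothetical helper, and any alert threshold should come from your own baseline.

```python
import math
from collections import Counter


def shannon_entropy(values):
    """Shannon entropy (bits) of the distribution of values."""
    if not values:
        return 0.0
    counts = Counter(values)
    total = len(values)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def delta_entropy(numeric_ids):
    """Entropy of the gaps between consecutive IDs requested by one principal.
    A single repeated gap (sequential enumeration) gives 0; varied gaps score higher."""
    gaps = [b - a for a, b in zip(numeric_ids, numeric_ids[1:])]
    return shannon_entropy(gaps)
```

For example, `delta_entropy([1001, 1002, 1003, 1004, 1005])` evaluates to 0, and `delta_entropy([3, 17, 4, 250, 88])` evaluates to 2.0 (four distinct gaps).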

Python: entropy helper

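```python
import math
from collections import Counter


def shannon_entropy(values):
    """Shannon entropy, in bits, of the distribution of values."""
    if not values:
        return 0.0
    c = Counter(values)
    total = len(values)
    return -sum((n / total) * math.log2(n / total) for n in c.values())


# Example usage:
# shannon_entropy(["A12", "A12", "A12", "B07"])         # ~0.81: heavy repetition
# shannon_entropy(["A12", "A13", "A14", "A15", "A16"])  # log2(5) ~ 2.32
# shannon_entropy(["X9Q", "P1Z", "0AA", "K7M", "Z2Z"])  # also ~2.32
# Whole-value entropy only measures repetition, so compare each principal against
# its own baseline, and use another feature (e.g. gaps between IDs) to spot
# sequential guessing.
```

Signal B — Unusual endpoint sequences (stateful patterns)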

Sequence matters more than volume.

Quick win: track the last N endpoint classes per session/user and alert on improbable chains:

- login → export → export → export

- password_reset → password_reset → password_reset

- search → search → search at machine-like intervals
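You can approximate "machine-like intervals" with the coefficient of variation (standard deviation divided by mean) of the gaps between a principal's requests. Scripted loops tend to sit near 0, while human traffic is bursty. This is a minimal sketch: the 0.1 cut-off and the 10-event minimum are illustrative assumptions, not tuned values.

```python
from statistics import mean, pstdev


def interarrival_cv(timestamps_ms):
    """Coefficient of variation of gaps between consecutive requests.
    Near 0 means metronome-like timing; human traffic is burstier."""
    ts = sorted(timestamps_ms)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None  # not enough data to judge
    return pstdev(gaps) / mean(gaps)


def looks_scripted(timestamps_ms, max_cv=0.1, min_events=10):
    """Hypothetical rule: enough events AND very regular spacing."""
    if len(timestamps_ms) < min_events:
        return False
    cv = interarrival_cv(timestamps_ms)
    return cv is not None and cv < max_cv
```

Combine this with the sequence itself. A `search → search → search` chain is only interesting when it is also this regular.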

Simple n-gram scoring (starter pattern)

```python
from collections import Counter

# Train on "normal" sequences: n-gram and prefix counts per endpoint class
ngram_counts = Counter()
prefix_counts = Counter()


def update_model(seq, n=3):
    for i in range(len(seq) - n + 1):
        ngram = tuple(seq[i:i + n])
        prefix = ngram[:-1]
        ngram_counts[ngram] += 1
        prefix_counts[prefix] += 1


def score_next(prefix, nxt):
    """Estimated P(nxt | prefix); lower probability => more suspicious.
    prefix must hold the last n-1 endpoint classes."""
    prefix = tuple(prefix)
    if prefix_counts[prefix] == 0:
        return 0.0  # prefix never seen in training: treat as maximally unusual
    return ngram_counts[prefix + (nxt,)] / prefix_counts[prefix]


# If the score is near 0 for an observed prefix -> next transition, flag for review/throttle
```

Signal C — Downstream errors and latency “echo”

Abuse often causes:

- DB timeouts

- queue backlog

- cache churn

- 429 spikes (your own controls firing)

This is where WAF-only programs miss the story.

Rule of thumb: alert on “behavior + downstream stress” correlation, not either alone.
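Here is a minimal sketch of that correlation rule. It assumes you already compute a per-principal behavior score and can pull downstream metrics (DB p95 latency, timeout rate) for the same time window. The field names, the 1.5× latency factor, and the 2× timeout factor are hypothetical starting points.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    behavior_score: float      # 0..100 from per-principal detectors (sequence, entropy, concurrency)
    db_p95_ms: float           # downstream DB p95 latency in the same window
    db_p95_baseline_ms: float  # e.g. the same hour last week
    timeout_rate: float        # fraction of requests that hit downstream timeouts
    timeout_baseline: float


def should_alert(w: WindowStats, behavior_min: float = 50,
                 latency_factor: float = 1.5, timeout_factor: float = 2.0) -> bool:
    behavior_hit = w.behavior_score >= behavior_min
    stress_hit = (
        w.db_p95_ms >= latency_factor * w.db_p95_baseline_ms
        # floor the baseline so we never compare against zero
        or w.timeout_rate >= timeout_factor * max(w.timeout_baseline, 0.001)
    )
    # Alert on the conjunction only; each signal alone is a noisier lead
    return behavior_hit and stress_hit
```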

Signal D — Principal-based concurrency

Bots parallelize aggressively; a human-driven client rarely keeps more than a handful of requests in flight.

Track concurrent in-flight requests per:

- API key

- user ID

- session ID

- device fingerprint (even a lightweight one)
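This is a process-local sketch of principal-based concurrency tracking. It uses a lock-protected counter and a context manager, so the count is decremented even when the handler raises. It is a starter that only sees one process: a horizontally scaled API needs the same logic against a shared store (for example, Redis INCR/DECR as in the middleware examples in section 4). Track each dimension under its own prefix, such as `key:<id>`, `user:<id>`, or `sess:<id>`. The class name is hypothetical.

```python
import threading
from collections import defaultdict
from contextlib import contextmanager


class InFlightTracker:
    """Counts in-flight requests per principal within a single process."""

    def __init__(self):
        self._lock = threading.Lock()
        self._counts = defaultdict(int)

    @contextmanager
    def track(self, principal: str):
        with self._lock:
            self._counts[principal] += 1
            current = self._counts[principal]
        try:
            yield current  # concurrency level including this request
        finally:
            with self._lock:
                self._counts[principal] -= 1
                if self._counts[principal] == 0:
                    del self._counts[principal]

    def current(self, principal: str) -> int:
        with self._lock:
            return self._counts.get(principal, 0)
```

In a handler, `with tracker.track(f"key:{key_id}") as level:` gives you the level to feed into a risk score (for example, treat `level > 8` as a signal).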

4) Real-time tooling patterns (stateful + adaptive)

A practical architecture for modern API security monitoring:

Edge (WAF) → API gateway → App middleware → Stateful store (Redis) → Logs/SIEM

4.1 Structured logging (non-negotiable for detection + DFIR)

Emit one JSON record per request with stable identifiers.
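This is a minimal Python emitter for such records, assuming you log to stdout and let a collector ship the lines. It stamps a UTC timestamp with millisecond precision in the `2026-02-22T10:15:12.012Z` format. It also serializes with sorted keys so records diff cleanly. `emit_request_log` is a hypothetical name.

```python
import json
import sys
from datetime import datetime, timezone


def utc_ts() -> str:
    # Millisecond precision with an explicit "Z" for UTC
    return datetime.now(timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z")


def emit_request_log(record: dict, stream=sys.stdout) -> str:
    """Write one JSON object per line; sorted keys keep field order stable."""
    line = json.dumps({"ts": utc_ts(), **record}, sort_keys=True, separators=(",", ":"))
    stream.write(line + "\n")
    return line
```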

Example JSON log schema

```json
{
  "ts": "2026-02-22T10:15:12.012Z",
  "request_id": "req_2f3c9f4a",
  "trace_id": "4b3b0c1c2f2b1a9e",
  "client_ip": "203.0.113.10",
  "principal": {"type": "api_key", "id": "key_8d2"},
  "session_id": "sess_b91",
  "method": "GET",
  "path": "/v1/export",
  "endpoint_class": "export",
  "status": 200,
  "latency_ms": 842,
  "bytes_in": 412,
  "bytes_out": 98123,
  "auth": {"result": "ok"},
  "risk_score": 62,
  "action": "throttle",
  "reason_codes": ["seq_anomaly", "high_concurrency"]
}
```

4.2 Express/Node middleware: risk score + adaptive throttling

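This is a starter pattern you can ship quickly. Run it in log-only mode first, and tune the weights and thresholds against your own traffic before enforcing.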

```javascript
import crypto from "crypto";
import Redis from "ioredis";

const redis = new Redis(process.env.REDIS_URL);

function requestId(req) {
  return req.headers["x-request-id"] || "req_" + crypto.randomBytes(8).toString("hex");
}

// Prefer the API key as the principal; hash it so no key material lands in Redis or logs
function principalOf(req) {
  const key = req.headers["x-api-key"];
  if (!key) return `ip:${req.ip}`;
  return "key:" + crypto.createHash("sha256").update(String(key)).digest("hex").slice(0, 16);
}

function endpointClass(path) {
  if (path.startsWith("/v1/login")) return "login";
  if (path.startsWith("/v1/otp")) return "otp";
  if (path.startsWith("/v1/export")) return "export";
  if (path.startsWith("/v1/search")) return "search";
  return "public_read";
}

// Anonymous by design: being unauthenticated here is not a risk signal on its own
const PRE_AUTH = new Set(["login", "otp", "public_read"]);

// Starter weights, chosen so a clean first login is allowed; tune on real traffic
const ENDPOINT_RISK = { login: 10, otp: 12, export: 12, search: 8, public_read: 0 };

function computeRisk(ctx) {
  let s = 0;
  if (!ctx.authenticated && !ctx.preAuth) s += 15;
  if (ctx.authFailRatio > 0.4) s += 25;
  if (ctx.sessionAgeSec < 30) s += 10; // brand-new principal/session
  s += ctx.endpointRisk;
  if (ctx.concurrent > 8) s += 20;
  return Math.min(s, 100);
}

function decision(score) {
  if (score < 25) return "allow";
  if (score < 55) return "throttle";
  if (score < 80) return "challenge";
  return "block";
}

function retryAfterSeconds(score) {
  const s = Math.max(0, Math.min(score, 100));
  return Math.ceil(1 + (s / 100) * 59); // 1..60
}

export async function abuseGuard(req, res, next) {
  const rid = requestId(req);
  res.setHeader("X-Request-Id", rid);
  const principal = principalOf(req);
  const cls = endpointClass(req.path);

  // Minimal state keys
  const concKey = `conc:${principal}`;
  const failKey = `fails:${principal}`;
  const sessKey = `sess:${principal}`;

  // Track concurrency: increment now, decrement exactly once when the response ends
  const concurrent = await redis.incr(concKey);
  await redis.expire(concKey, 60);
  const start = Date.now();

  let done = false;
  const onDone = async () => {
    if (done) return;
    done = true;
    await redis.decr(concKey);
    // Count auth failures from the app, not the 401 challenges this guard issues itself
    if (res.statusCode === 401 && !res.locals.abuseGuarded) {
      await redis.incr(failKey);
      await redis.expire(failKey, 300);
    }
    console.log(JSON.stringify({
      ts: new Date().toISOString(),
      request_id: rid,
      principal,
      path: req.path,
      endpoint_class: cls,
      status: res.statusCode,
      latency_ms: Date.now() - start,
      action: res.locals.abuseAction ?? "allow",
    }));
  };
  // "close" also fires when the client aborts, so the concurrency counter cannot leak
  const settle = () => onDone().catch((err) => console.error("abuseGuard bookkeeping failed", err));
  res.on("finish", settle);
  res.on("close", settle);

  // First-seen timestamp (NX = set only if absent), so session age counts from the first request
  await redis.set(sessKey, String(Date.now()), "EX", 3600, "NX");
  const firstSeen = parseInt((await redis.get(sessKey)) || String(Date.now()), 10);
  const sessionAgeSec = (Date.now() - firstSeen) / 1000;

  const fails = parseInt((await redis.get(failKey)) || "0", 10);
  const authFailRatio = fails / Math.max(1, fails + 10); // starter heuristic

  const score = computeRisk({
    authenticated: Boolean(req.user), // assumes auth middleware ran earlier
    preAuth: PRE_AUTH.has(cls),
    authFailRatio,
    sessionAgeSec,
    endpointRisk: ENDPOINT_RISK[cls] ?? 5,
    concurrent,
  });

  const act = decision(score);
  res.locals.abuseAction = act;
  if (act === "allow") return next();
  res.locals.abuseGuarded = true;

  if (act === "throttle") {
    res.setHeader("Retry-After", String(retryAfterSeconds(score)));
    return res.status(429).json({ error: "Too Many Requests", request_id: rid });
  }

  if (act === "challenge") {
    // Keep it simple: step-up to re-auth / stronger token / mTLS for B2B
    return res.status(401).json({ error: "Step-up required", request_id: rid });
  }

  return res.status(403).json({ error: "Blocked", request_id: rid });
}
```

4.3 Atomic token bucket (Redis Lua) for cost-based enforcement

Cost-based throttling stops expensive endpoints from being abused “within limits”.
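You can check the refill arithmetic in isolation before wiring anything into Redis. The following is a pure-Python model of token-bucket logic with a per-request cost, useful for unit-testing capacities and refill rates. It is a single-process model only; in production the atomicity comes from running the script inside Redis. `TokenBucket` is a hypothetical name, and the numbers in the usage note are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TokenBucket:
    capacity: float
    refill_per_ms: float
    tokens: Optional[float] = None   # None = bucket not created yet
    last_ms: Optional[float] = None

    def try_consume(self, now_ms: float, cost: float) -> bool:
        if self.tokens is None or self.last_ms is None:
            self.tokens, self.last_ms = self.capacity, now_ms  # a new bucket starts full
        # Refill for the elapsed time, never beyond capacity
        elapsed = max(0.0, now_ms - self.last_ms)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_ms)
        self.last_ms = now_ms
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

With `capacity=10` and `refill_per_ms=0.001` (one token per second), a cost-8 export at t=0 succeeds. The same export is refused at t=100 ms and allowed again at t=6100 ms, when the bucket has refilled to about 8.1 tokens. Cheap reads costing 1 token barely notice the limit.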

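```lua
-- token_bucket.lua
-- KEYS[1] = bucket key
-- ARGV[1] = now_ms
-- ARGV[2] = refill_rate_per_ms (must be > 0)
-- ARGV[3] = capacity
-- ARGV[4] = cost
-- Returns 1 if the request may proceed, 0 if it should be throttled.
local key = KEYS[1]
local now = tonumber(ARGV[1])
local rate = tonumber(ARGV[2])
local cap = tonumber(ARGV[3])
local cost = tonumber(ARGV[4])

if not rate or rate <= 0 then
  return redis.error_reply("refill_rate_per_ms must be > 0")
end

local data = redis.call("HMGET", key, "tokens", "ts")
local tokens = tonumber(data[1]) or cap  -- a new bucket starts full
local ts = tonumber(data[2]) or now

-- refill based on elapsed time, capped at capacity
local delta = math.max(0, now - ts)
tokens = math.min(cap, tokens + delta * rate)

local allowed = 0
if tokens >= cost then
  tokens = tokens - cost
  allowed = 1
end

redis.call("HSET", key, "tokens", tokens, "ts", now)
-- Expire only once the bucket would have refilled completely; a fixed short TTL
-- would hand a drained principal a full bucket just for pausing
redis.call("PEXPIRE", key, math.ceil(cap / rate))
return allowed
```

Caller-side idea: compute cost per request using endpoint class + risk score: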

```javascript
function requestCost(endpointClass, score) {
  const base = { public_read: 1, search: 2, login: 5, otp: 6, export: 8, payment: 10 }[endpointClass] ?? 2;
  const extra = Math.floor(score / 20); // 0..5 for scores 0..100
  return base + extra;
}
```

4.4 FastAPI (Python) middleware variant

```python
import hashlib
import json
import secrets
import time
from datetime import datetime, timezone

import redis.asyncio as redis  # async client (redis-py >= 4.2) so Redis calls don't block the event loop
from fastapi import FastAPI, Request
from fastapi.responses import JSONResponse

r = redis.Redis.from_url("redis://localhost:6379/0")
app = FastAPI()


def endpoint_class(path: str) -> str:
    if path.startswith("/v1/login"): return "login"
    if path.startswith("/v1/otp"): return "otp"
    if path.startswith("/v1/export"): return "export"
    if path.startswith("/v1/search"): return "search"
    return "public_read"


def principal_of(request: Request) -> str:
    key = request.headers.get("x-api-key")
    if key:
        # Hash the key so no key material lands in Redis or logs
        return "key:" + hashlib.sha256(key.encode()).hexdigest()[:16]
    return "ip:" + (request.client.host if request.client else "unknown")


@app.middleware("http")
async def abuse_guard(request: Request, call_next):
    rid = request.headers.get("x-request-id") or f"req_{secrets.token_hex(8)}"
    cls = endpoint_class(request.url.path)
    principal = principal_of(request)
    conc_key = f"conc:{principal}"
    concurrent = await r.incr(conc_key)
    await r.expire(conc_key, 60)
    start = time.perf_counter()
    status = 500  # reported if the handler raises

    try:
        # Simple starter rule: throttle abnormal concurrency on expensive endpoints
        if cls in {"export", "otp", "login"} and concurrent > 12:
            status = 429
            return JSONResponse({"error": "Too Many Requests", "request_id": rid},
                                status_code=429, headers={"X-Request-Id": rid})
        response = await call_next(request)
        status = response.status_code
        response.headers["X-Request-Id"] = rid
        return response
    finally:
        await r.decr(conc_key)
        # One JSON line per request to stdout / your log collector
        print(json.dumps({
            "ts": datetime.now(timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z"),
            "request_id": rid,
            "principal": principal,
            "path": request.url.path,
            "endpoint_class": cls,
            "status": status,
            "latency_ms": int((time.perf_counter() - start) * 1000),
        }))
```

5) Response playbook: throttle → challenge → contain

For API abuse response playbooks, aim for progressive friction:

Step 1 — Dynamic throttling (fastest, least disruptive)

- return 429 with `Retry-After`

- ramp backoff based on risk score and endpoint cost

- prefer principal-based throttles (API key/user/session), not IP-only
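Here is one way to ramp backoff based on risk score and endpoint cost, sketched in Python. The delay doubles with each recent violation by the same principal, grows with endpoint cost and risk, and is capped. The formula and the 300-second cap are illustrative. The sketch also assumes you keep a per-principal violation counter (for example, a short-TTL Redis key).

```python
import math


def retry_after_seconds(risk_score: int, endpoint_cost: int, recent_violations: int,
                        base_s: float = 1.0, cap_s: int = 300) -> int:
    """Hypothetical backoff ramp for a 429's Retry-After value."""
    risk = max(0, min(risk_score, 100))
    scale = (1 + endpoint_cost / 10) * (1 + risk / 100)
    delay = base_s * scale * 2 ** min(recent_violations, 10)  # cap the exponent too
    return int(min(cap_s, max(1, math.ceil(delay))))
```

A first, low-risk violation on a cheap endpoint waits 2 seconds. A risk-50 principal on its fourth violation against a cost-8 export waits 22 seconds, and repeat offenders quickly hit the cap.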

Step 2 — Challenge escalation (only on risk)

Use step-up controls selectively:

- re-authentication for sensitive flows

- short-lived tokens

- mTLS for B2B API clients (where feasible)

- “proof token” bound to session (avoid permanent friction)
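The following is a minimal sketch of a session-bound proof token, assuming the server holds a secret key. After a successful challenge, the server issues an HMAC over the session ID and an expiry, and accepts it only for that session until it expires. Because the token expires on its own, the extra friction does not become permanent. The `exp.mac` token layout and the function names are hypothetical; a production design would also rotate the secret.

```python
import hashlib
import hmac
import time
from typing import Optional


def _mac(secret: bytes, session_id: str, exp: int) -> str:
    return hmac.new(secret, f"{session_id}|{exp}".encode(), hashlib.sha256).hexdigest()


def issue_proof(secret: bytes, session_id: str, ttl_s: int = 300, now: Optional[float] = None) -> str:
    """Issue after a passed challenge; valid only for this session until it expires."""
    exp = int((time.time() if now is None else now) + ttl_s)
    return f"{exp}.{_mac(secret, session_id, exp)}"


def verify_proof(secret: bytes, session_id: str, token: str, now: Optional[float] = None) -> bool:
    try:
        exp_str, mac = token.split(".", 1)
        exp = int(exp_str)
    except ValueError:
        return False  # malformed token
    if (time.time() if now is None else now) > exp:
        return False  # expired: the friction is temporary by design
    # Constant-time comparison avoids leaking how many characters matched
    return hmac.compare_digest(mac, _mac(secret, session_id, exp))
```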

Step 3 — Containment and “downstream protection”

When you detect high-confidence abuse:

- freeze/rotate the abused API key

- temporarily disable high-cost endpoints for that principal

- route suspicious traffic to a minimal handler that returns safe errors quickly

- protect dependencies (DB, queues) with circuit breakers
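Here is a minimal circuit breaker for protecting dependencies, sketched in Python under simple assumptions: single-threaded use, consecutive-failure counting, and one trial call allowed after a cool-down (the half-open state). While open, it fails fast instead of adding load to a struggling DB or queue. Class names and defaults are hypothetical.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the dependency while the breaker is open."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 5, reset_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.reset_s = reset_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_s:
            raise CircuitOpenError("dependency protected: failing fast")
        # Closed, or half-open after the cool-down: let this call through
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # success closes the breaker
        return result
```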

Node: cheap-fail for abusive principals

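```javascript
import crypto from "crypto";

const deny = new Set(); // in production, load and refresh this from Redis or your policy store

// Keep this derivation identical to the one your abuse guard uses, or denylist entries won't match
function principalOf(req) {
  const key = req.headers["x-api-key"];
  if (!key) return `ip:${req.ip}`;
  return "key:" + crypto.createHash("sha256").update(String(key)).digest("hex").slice(0, 16);
}

export function denylistGuard(req, res, next) {
  const principal = principalOf(req);
  if (deny.has(principal)) {
    // Cheap response; preserve request_id for DFIR correlation
    const rid = req.headers["x-request-id"] || "req_" + crypto.randomBytes(8).toString("hex");
    res.set("X-Request-Id", rid);
    return res.status(403).json({ error: "Blocked", request_id: rid });
  }
  return next();
}
```

6) Logging for incident readiness (DFIR-friendly)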

If you want API abuse detection to hold up during real incidents, log for reconstruction:

Minimum fields to capture:

- `request_id`, `trace_id`, timestamp (UTC), service name

- principal identifiers: `user_id`, `api_key_id`, `session_id`

- endpoint + method + status

- latency, bytes in/out, downstream timing (DB/queue)

- decision + reason codes (why throttled/blocked)

- auth outcomes (success/fail type), token refresh events
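Here is a quick readiness check you could run against sampled log lines: it reports which minimum fields are missing. The flat field names below (`service`, `db_time_ms`, `auth_result`, and so on) are assumptions about your schema; rename them to match your logs.

```python
REQUIRED_FIELDS = {
    "ts", "request_id", "trace_id", "service",          # correlation + UTC time
    "method", "path", "status",                          # what was called
    "latency_ms", "bytes_in", "bytes_out", "db_time_ms",  # cost and downstream timing
    "action", "reason_codes",                            # guard decision and why
    "auth_result",                                       # auth outcome
}
PRINCIPAL_FIELDS = ("user_id", "api_key_id", "session_id")  # need at least one


def missing_fields(record: dict) -> list[str]:
    """Names of minimum fields that are absent or null in one log record."""
    missing = sorted(f for f in REQUIRED_FIELDS if record.get(f) is None)
    if not any(record.get(f) for f in PRINCIPAL_FIELDS):
        missing.append("user_id|api_key_id|session_id")
    return missing
```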

When an incident hits, your DFIR team should be able to answer:

- What happened first?

- Which principal did what?

- What changed after we deployed controls?

Need DFIR support or logging readiness validation?

DFIR services: https://www.pentesttesting.com/digital-forensic-analysis-services/

Remediation + hardening: https://www.pentesttesting.com/remediation-services/

SQL: timeline reconstruction (example)

```sql
SELECT
  ts, request_id, principal_id, path, status, latency_ms, action, reason_codes
FROM api_gateway_logs
WHERE principal_id = 'key_8d2'
  AND ts >= '2026-02-22 00:00:00'
  AND ts <  '2026-02-23 00:00:00'  -- half-open range keeps sub-second events after 23:59:59
ORDER BY ts ASC;
```

7) Case example: fix pattern + measurable outcome

Problem: An API saw recurring spikes in DB CPU and “random” timeouts. WAF alerts looked normal, and IP-based rate limits weren’t triggering.

Findings (behavioral):

- high concurrency per API key on `/export` and `/search`

- endpoint chain anomalies (`search → export`) repeating at machine intervals

- entropy shifts in search filters (systematic mutation)

Controls deployed:

- cost-based token bucket per principal for expensive endpoints

- sequence anomaly scoring in middleware → throttle/challenge

- cheaper failure mode for high-confidence abuse

Measured outcome (within 72 hours):

- large drop in downstream timeouts (5xx)

- DB CPU stabilized during peak

- abuse traffic contained without impacting normal users (minimal false positives)

Traffic graph suggestion (before/after):

- Graph 1: `requests/min` vs `429/min`

- Graph 2: `db_time_ms` p95 before/after

- Graph 3: export endpoint latency p95 before/after

Your engineering team can generate these from gateway logs and APM metrics.
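For Graph 1, here is a minimal sketch that buckets JSON-lines request logs per minute and counts total requests and 429s. It assumes each line has a UTC ISO-8601 `ts` (like the schema example in 4.1) and an integer `status`. Slicing the first 16 characters of `ts` yields the minute only when every timestamp uses the same UTC format.

```python
import json
from collections import defaultdict


def per_minute(lines):
    """Return {minute: (requests, throttled_429)} sorted by minute."""
    buckets = defaultdict(lambda: [0, 0])
    for line in lines:
        rec = json.loads(line)
        minute = rec["ts"][:16]  # "YYYY-MM-DDTHH:MM"
        buckets[minute][0] += 1
        if rec.get("status") == 429:
            buckets[minute][1] += 1
    return {m: tuple(v) for m, v in sorted(buckets.items())}
```

Run it over the same window before and after deployment, and plot both series on one chart.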

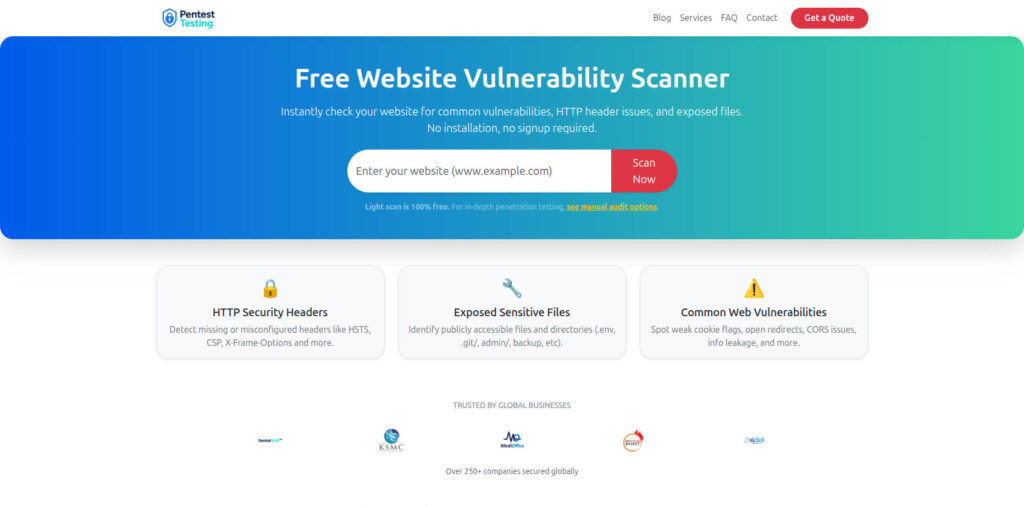

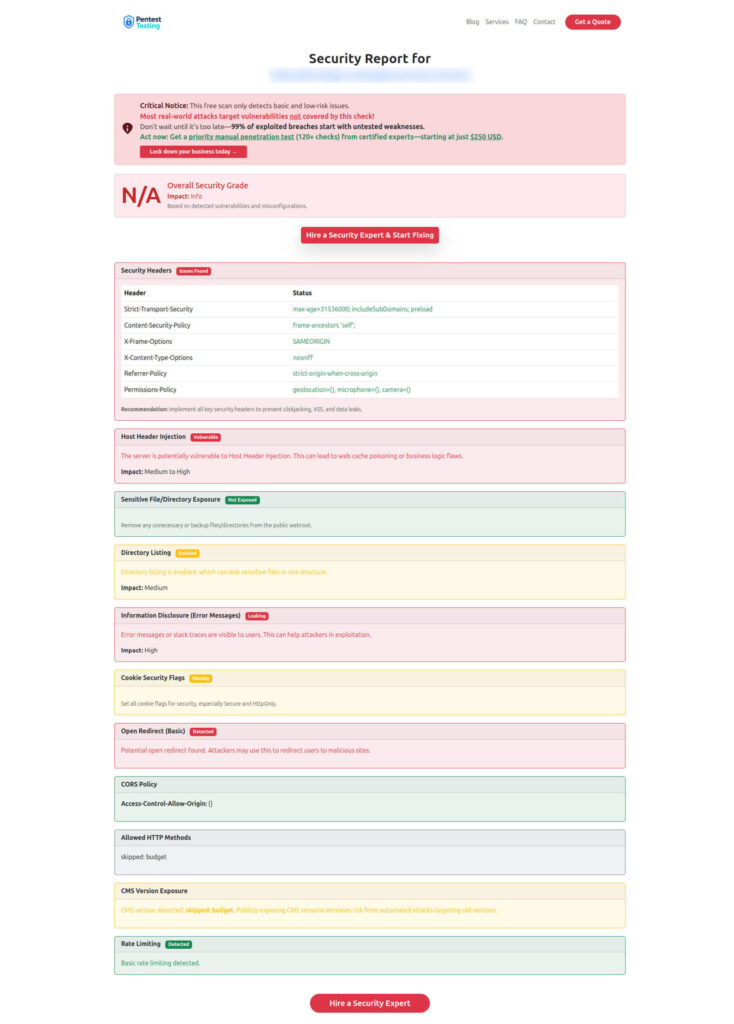

8) Free tool + sample report

Free Website Vulnerability Scanner tool Dashboard

Link (Free Tool): https://free.pentesttesting.com/

Sample report to check Website Vulnerability

Practical next steps

If you want a professional assessment and an implementation roadmap:

- API penetration testing / risk assessment: https://www.pentesttesting.com/risk-assessment-services/

- API testing services: https://www.pentesttesting.com/api-pentest-testing-services/

- Remediation services: https://www.pentesttesting.com/remediation-services/

- DFIR + logging readiness: https://www.pentesttesting.com/digital-forensic-analysis-services/

Related recent reads from our blog

- 7 Powerful Risk-Driven API Throttling Tactics

- 9 Powerful Webhook Security Patterns That Stop Breaches

- 7 Powerful Forensic Readiness Steps for SMBs

- 7 Powerful Endpoint Deception Strategies to Contain Breaches

🔐 Frequently Asked Questions (FAQs)

Find answers to commonly asked questions about API Abuse Detection Plays.